Simon Klenk*, David Bonello*, Lukas Koestler*, Daniel Cremers

arXiv 2022

Masked Event Modeling (MEM) is a self-supervised, BERT-inspired pretraining framework for unlabeled events from any event camera recording. The method outperforms the state of the art on N-ImageNet, N-Cars, and N-Caltech101, increasing the object classification accuracy on N-ImageNet by 7.96%.

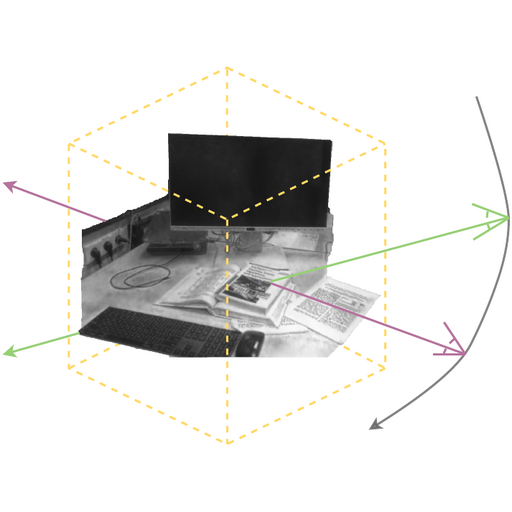

Simon Klenk, Lukas Koestler, Davide Scaramuzza, Daniel Cremers

RA-L 2023

E-NeRF shows how to estimate a neural radiance field (NeRF) from a single moving event camera or from an event camera in combination with a standard camera. The proposed method can recover NeRFs during very fast motion and in high dynamic range conditions, where frame-based approaches fail.

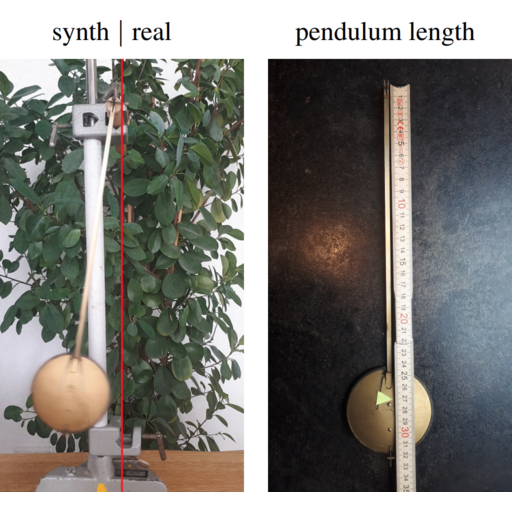

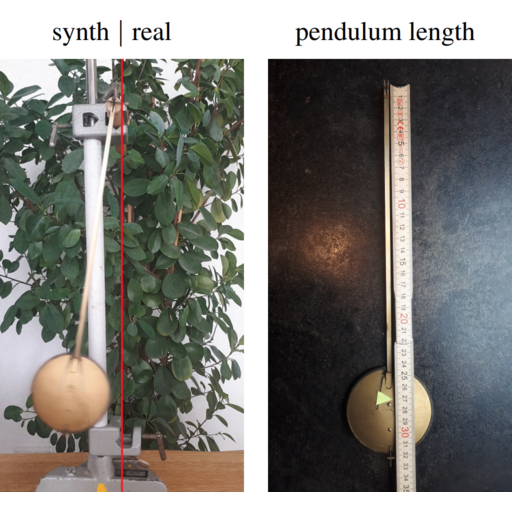

Florian Hofherr, Lukas Koestler, Florian Bernard, Daniel Cremers

WACV 2023

This work combines neural implicit representations for appearance modeling with neural ODEs for modeling physical phenomena to obtain a dynamic scene representation that can be identified directly from visual observations. The embedded neural ODE has a known parametric form that allows for the identification of interpretable physical parameters.

Lukas Koestler*, Daniel Grittner*, Michael Moeller, Daniel Cremers, Zorah Lähner

ECCV 2022

Intrinsic neural fields are a novel and versatile representation for functions on manifolds. They combine the advantages of neural fields with the spectral properties of the Laplace-Beltrami operator.

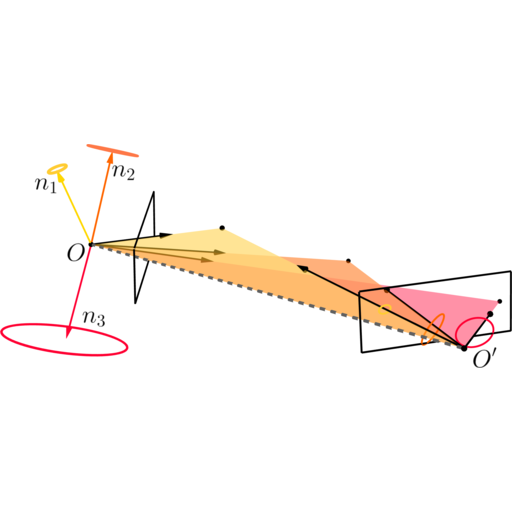

Dominik Muhle*, Lukas Koestler*, Nikolaus Demmel, Florian Bernard, Daniel Cremers

CVPR 2022

The probabilistic normal epipolar constraint (PNEC) extends the NEC by Kneip et al. by accounting for anisotropic and inhomogeneous uncertainties in the feature positions, which yields more accurate rotation estimates.
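The idea of weighting each epipolar residual by its own uncertainty can be illustrated with a minimal sketch. It is not the authors' implementation, and it rests on several assumptions: points transform as `X1 = R @ X2 + t`, only the bearing vectors in the second frame are noisy, and each has a 3×3 covariance. The names `skew`, `pnec_cost`, `f1`, `f2`, `cov2`, and `eps` are hypothetical. Because the epipolar plane normal `n = f1 × (R f2)` is linear in `f2`, the variance of the residual `t · n` follows from standard covariance propagation.

```python
import numpy as np

def skew(v):
    """Return the 3x3 matrix S such that S @ w == np.cross(v, w)."""
    x, y, z = v
    return np.array([[0.0, -z, y],
                     [z, 0.0, -x],
                     [-y, x, 0.0]])

def pnec_cost(R, t, f1, f2, cov2, eps=1e-12):
    """Sum of squared epipolar residuals, each divided by its variance.

    R, t : relative pose with the assumed convention X1 = R @ X2 + t
    f1   : (N, 3) unit bearing vectors in frame 1
    f2   : (N, 3) unit bearing vectors in frame 2
    cov2 : sequence of N (3, 3) covariances of the f2 vectors (assumed input)
    eps  : small constant to avoid division by zero (illustrative choice)
    """
    cost = 0.0
    for f, fp, sigma in zip(f1, f2, cov2):
        A = skew(f) @ R               # n = A @ fp is the epipolar plane normal
        residual = t @ (A @ fp)       # zero when n is orthogonal to t
        variance = t @ A @ sigma @ A.T @ t   # propagated covariance of f2
        cost += residual**2 / (variance + eps)
    return cost
```

With identical isotropic covariances, every residual receives nearly the same weight and the cost behaves like an unweighted sum of squared residuals. The anisotropic, per-feature case is where the weighting changes which rotation minimizes the cost.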

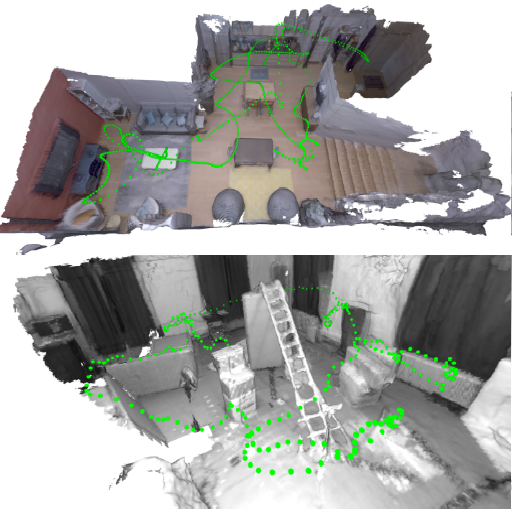

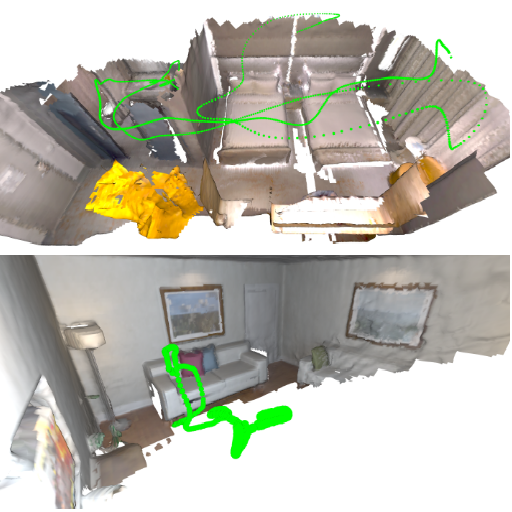

Lukas Koestler*, Nan Yang*, Niclas Zeller, Daniel Cremers

CoRL 2021 and 3DV 2021 Best Demo Award

TANDEM combines photometric tracking and deep multi-view stereo depth estimation into a monocular dense SLAM algorithm. Using depth maps rendered from the incrementally built TSDF model improves tracking robustness.

Lukas Koestler, Nan Yang, Rui Wang, Daniel Cremers

GCPR 2020

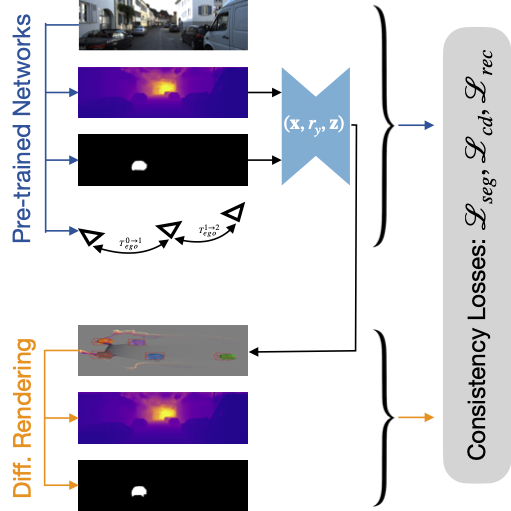

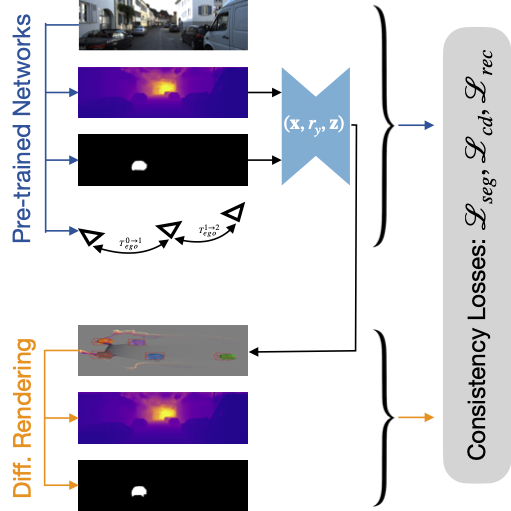

By predicting object meshes and employing differentiable rendering, we define loss functions based on depth maps, segmentation masks, and ego- and object-motion, which are generated by pre-trained, off-the-shelf networks.